Neuromorphic information processing

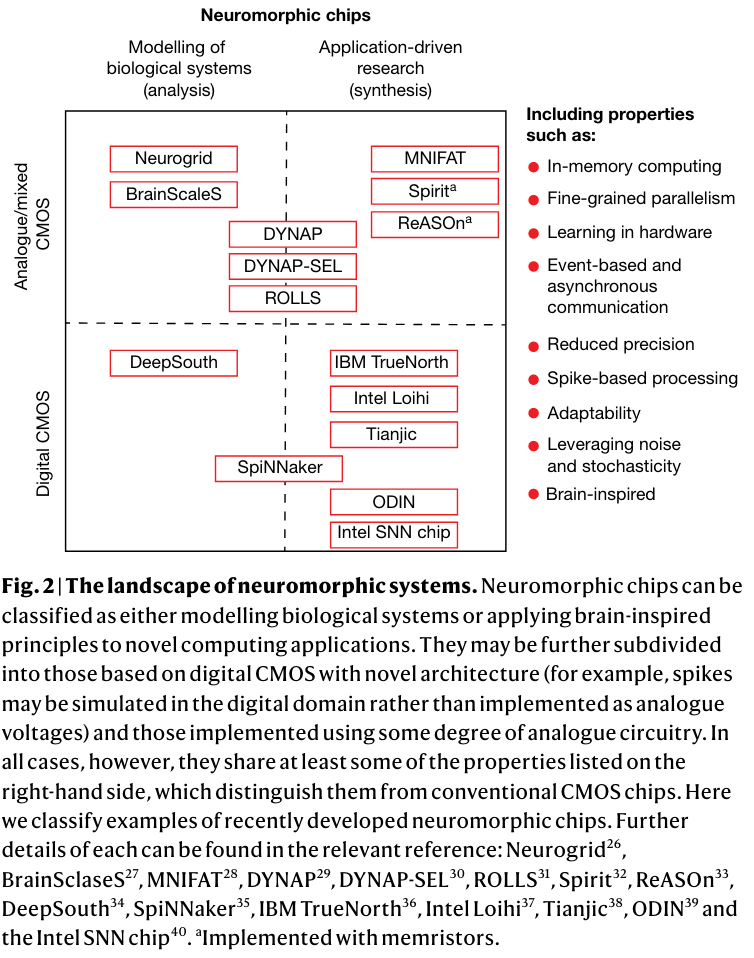

Let's step away from von Neumann architectures for a moment and think about new computing paradigms, à la mortal computation, for building the software and hardware stacks of the AI future. These would involve, among other things:

- Co-located memory and compute, maybe removing the distinction between them entirely.

- Processors currently spend most of their time and energy moving data between memory and compute.

- Massively more parallel, with massively reduced power consumption relative to GPUs.

- Truly plastic hardware using memristors instead of simulating plastic weights in exact silicon registers.

- Deep learning implements probabilistic models on deterministic hardware, which sounds kind of dumb when you think about it.
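The memristor crossbar is one way to picture co-located memory and compute together with plastic hardware. The sketch below makes several assumptions. The devices are ideal, with no wire resistance, noise, or nonlinearity. The function names `crossbar_read` and `crossbar_update` are hypothetical. The learning rule is a delta-rule-style outer-product update chosen for illustration, not a model of any specific device.

In this picture, the conductance matrix is the stored weight matrix. Reading the column currents performs the matrix-vector multiply, so the weights never travel to a separate processor.

```python
from typing import List

Matrix = List[List[float]]


def crossbar_read(conductances: Matrix, voltages: List[float]) -> List[float]:
    """Column currents of an idealized crossbar (assumes ideal devices).

    Row i is driven at voltages[i]; the device at (i, j) has conductance
    conductances[i][j]. By Ohm's law each device passes G_ij * V_i, and by
    Kirchhoff's current law column j sums them: I_j = sum_i G_ij * V_i.
    The weights (conductances) are used where they are stored.
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for i, v in enumerate(voltages):
        for j in range(n_cols):
            currents[j] += conductances[i][j] * v
    return currents


def crossbar_update(
    conductances: Matrix,
    voltages: List[float],
    errors: List[float],
    lr: float,
    g_min: float = 0.0,
    g_max: float = 1.0,
) -> None:
    """Hypothetical in-place plasticity rule (delta-rule-style, assumed).

    Each device's conductance is nudged by lr * V_i * e_j and clipped to the
    device's assumed physical range [g_min, g_max]. The weight is modified
    in the array itself rather than copied out to a register and back.
    """
    for i, v in enumerate(voltages):
        for j, e in enumerate(errors):
            g = conductances[i][j] + lr * v * e
            conductances[i][j] = min(g_max, max(g_min, g))


if __name__ == "__main__":
    G = [[0.5, 0.25], [0.25, 0.5]]
    print(crossbar_read(G, [1.0, 2.0]))  # [1.0, 1.25]
    crossbar_update(G, [1.0, 2.0], [0.5, -0.5], lr=0.25)
    print(G)  # [[0.625, 0.125], [0.5, 0.25]]
```

In a physical crossbar, the read happens as one analog step across all columns at once, which is where the claimed parallelism and power savings would come from. The Python loops here only emulate that step.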

State of the art as of 2023/02/14

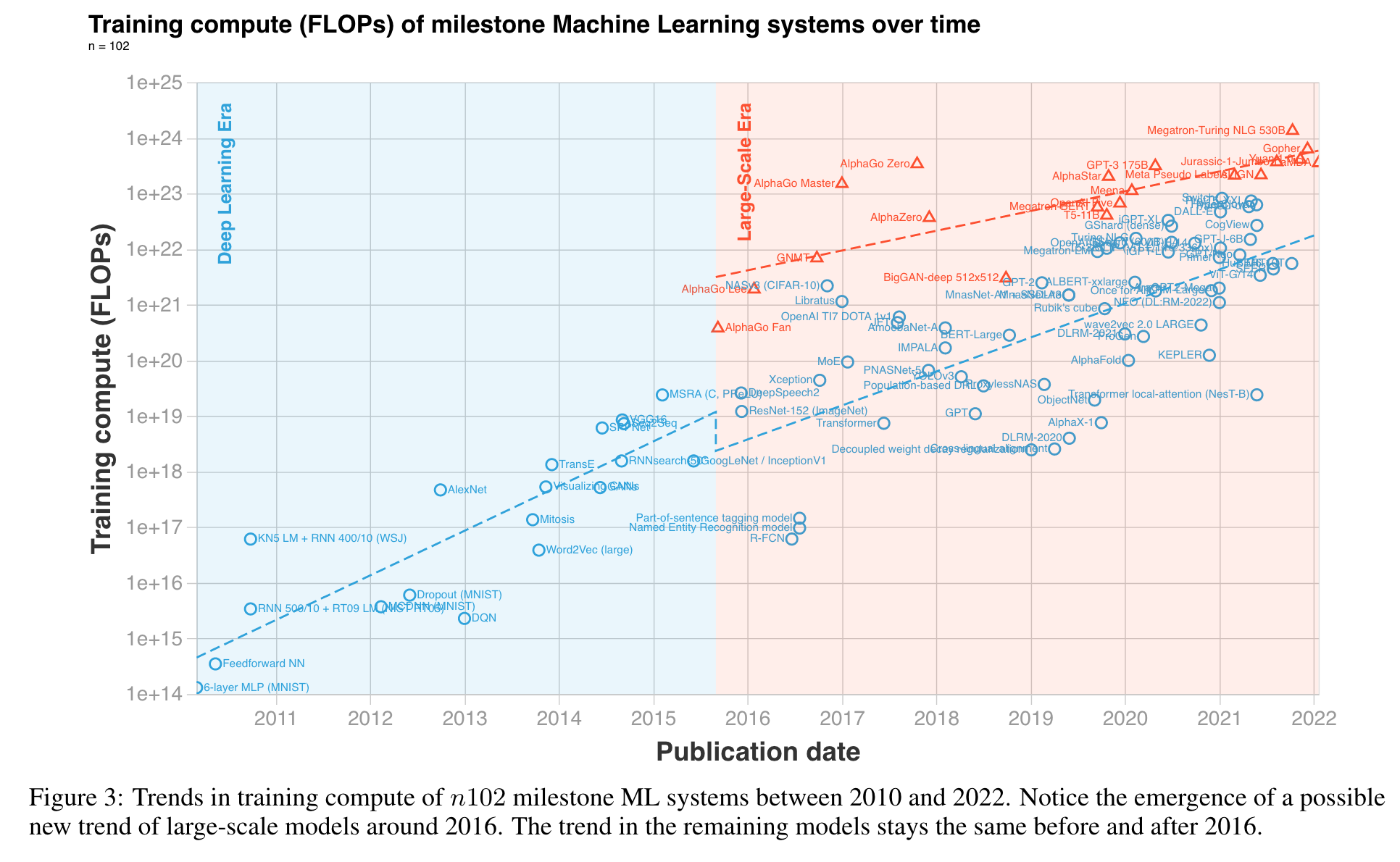

These systems are needed because we can't sustain the current growth in power requirements for training ML systems. There are already some indications that compute doubling times are getting longer, despite all the LLM hype:

Links