Predictive Learning

More specifically, latent predictive learning.

Overarching question

Does the brain use prediction in latent space as a learning objective?

Elevator pitch

Our brains adapt to summarize information about the outside world. You are never surprised that a car looks different from the side than from the front, because the differences are details you don’t typically care about. Our idea is that, as babies, we are constantly surprised that things look different from different viewpoints, and that over time the brain’s connections change to keep reducing that surprise. I formalize this idea mathematically and try to get a computer to learn in the same way. So far, the results are consistent in many surprising ways with what we know about how the brain works, so we think this approach is promising.

Research rationale

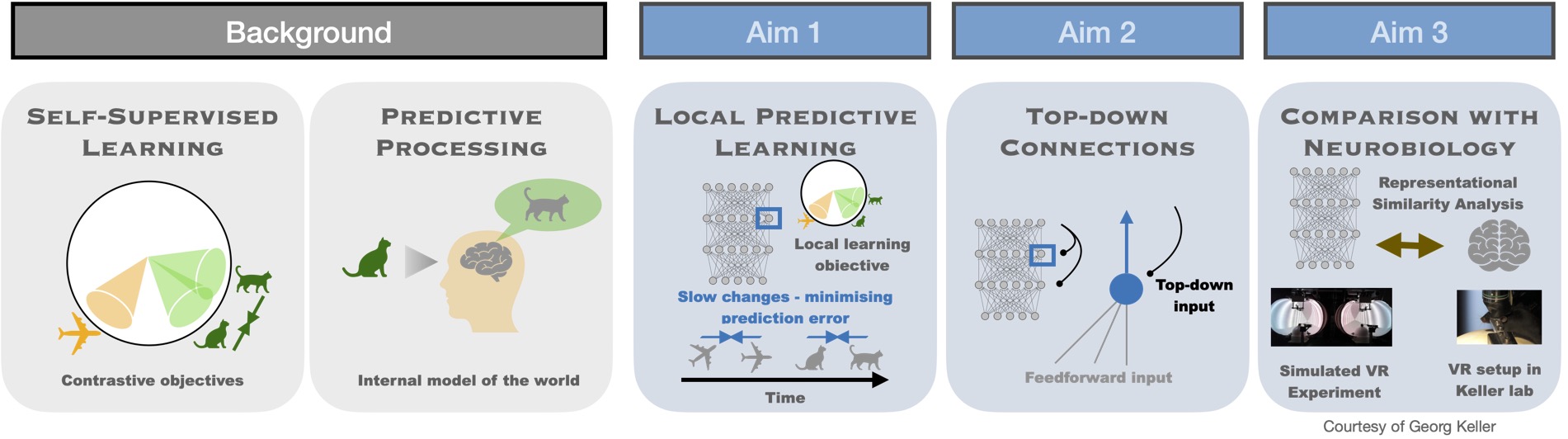

To answer whether the brain uses prediction as a learning objective, we first need to identify candidate learning objectives and learning rules that we can later evaluate for biological relevance. Our strategy is to use deep artificial neural networks as a testbed for selecting candidate predictive learning objectives. This restricts the search space to objectives that are already known to be effective for unsupervised representation learning in hierarchical networks, albeit in an artificial setting. Self-supervised learning objectives are very promising in this regard. Furthermore, several of these objectives remain effective when optimized in a layer-local manner, without backpropagation. They yield local learning rules that can potentially be mapped to neurobiological learning rules, allowing comparisons on several levels: representations, learning rules, and architectural motifs.
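As a rough illustration of what "layer-local, without backpropagation" can mean, the sketch below trains each layer of a small stack on its own latent prediction error between two consecutive inputs. Everything here is an assumption made for illustration: the layers are linear, the predictor is the identity (the latent at time t is used as the prediction for time t+1), the loss is a squared error, and the function names `matvec`, `local_predictive_step`, and `train_layerwise` are hypothetical. It is not the specific objective studied in this project.

```python
def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def local_predictive_step(W, x_now, x_next, lr=0.01):
    """One gradient step on L = ||W x_next - W x_now||^2 with respect to W only.

    The inputs are treated as constants, so the update uses only
    quantities available at this layer.
    """
    z_now, z_next = matvec(W, x_now), matvec(W, x_next)
    err = [b - a for a, b in zip(z_now, z_next)]   # latent prediction error
    dx = [b - a for a, b in zip(x_now, x_next)]    # change in the layer's input
    loss = sum(e * e for e in err)
    # dL/dW[i][j] = 2 * err[i] * dx[j]
    W_new = [[w - lr * 2.0 * e * d for w, d in zip(row, dx)]
             for row, e in zip(W, err)]
    return W_new, loss


def train_layerwise(layers, x_now, x_next, lr=0.01):
    """Update every layer with its own local loss; no gradient crosses layers."""
    h_now, h_next = x_now, x_next
    new_layers, losses = [], []
    for W in layers:
        W_new, loss = local_predictive_step(W, h_now, h_next, lr)
        new_layers.append(W_new)
        losses.append(loss)
        # Activations are passed upward as plain numbers (an implicit stop-gradient).
        h_now, h_next = matvec(W_new, h_now), matvec(W_new, h_next)
    return new_layers, losses
```

Note that this loss on its own is minimized trivially by setting all weights to zero, which maps every input to the same latent. Practical objectives therefore pair the predictive term with some mechanism that prevents such collapse.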

However, several biologically implausible elements are baked into existing learning objectives, negative samples being one example, and we aim to eliminate them while preserving the functional usefulness of the learned representations. Progress on this front has been rapid, both in my research and in the field in general, and further progress is expected throughout my Ph.D. studies.
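To make the role of negative samples concrete, the sketch below contrasts two toy losses. The first is an InfoNCE-style contrastive loss, which needs explicit negatives to score the positive against. The second uses no negatives: it combines a prediction error with a per-dimension variance floor, one common way to discourage collapsed representations. The function names, the dot-product scoring, and the `min_std` parameter are assumptions chosen for illustration, and neither function is claimed to be the objective used in this project.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def info_nce(pred, positive, negatives):
    """Contrastive loss: negative log-softmax score of the positive
    among the positive plus all negative candidates."""
    scores = [dot(pred, positive)] + [dot(pred, n) for n in negatives]
    m = max(scores)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(s - m) for s in scores))
    return log_sum - scores[0]


def negative_free_loss(preds, targets, min_std=1.0):
    """Mean squared prediction error plus a hinge penalty that keeps the
    standard deviation of each target dimension above `min_std`.
    No negative samples are required."""
    n, d = len(preds), len(preds[0])
    mse = sum(
        sum((p - t) ** 2 for p, t in zip(pv, tv))
        for pv, tv in zip(preds, targets)
    ) / n
    penalty = 0.0
    for j in range(d):
        col = [t[j] for t in targets]
        mean = sum(col) / n
        std = math.sqrt(sum((c - mean) ** 2 for c in col) / n)
        penalty += max(0.0, min_std - std)
    return mse + penalty / d
```

In this sketch the contrastive loss depends on comparing each positive against other stored samples, whereas the negative-free loss only needs statistics of the representation itself, which is one reason such objectives are attractive candidates for local learning rules.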

This approach additionally offers the opportunity to explore functional roles for top-down connections in learning and inference, an auxiliary research question that can be pursued in parallel.

Theoretical:

- Richards et al. (2019) - A deep learning framework for neuroscience

- LeCun (2022) - A Path Towards Autonomous Machine Intelligence Version 0.9.2, 2022-06-27

- van den Oord, Li and Vinyals (2019) - Representation Learning with Contrastive Predictive Coding

- Balestriero and LeCun (2024) - Learning by Reconstruction Produces Uninformative Features For Perception

- Levenstein et al. (2024) - Sequential predictive learning is a unifying theory for hippocampal representation and replay

- Recanatesi et al. (2021) - Predictive learning as a network mechanism for extracting low-dimensional latent space representations

Experimental:

- Leinweber et al. (2017) - A Sensorimotor Circuit in Mouse Cortex for Visual Flow Predictions

- Bellet et al. (2024) - Spontaneously emerging internal models of visual sequences combine abstract and event-specific information in the prefrontal cortex

- Gavornik and Bear (2014) - Learned spatiotemporal sequence recognition and prediction in primary visual cortex

Secondaries: